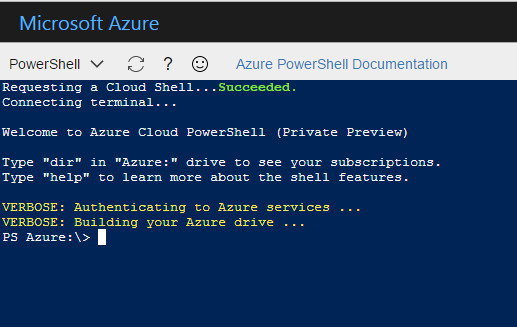

At Ignite earlier this week, Microsoft announced that the PSCloudShell preview would become publicly available to all Azure tenants. If you’re not aware of PSCloudShell, it is a fully functional PowerShell console embedded in your Azure tenant’s web management portal. In PSCloudShell, your Azure subscriptions and all of their resources are browsable as a PowerShell drive, so you can navigate them with the same commands you use on the file system. This Azure drive is made possible by a module called SHiPS.

What is SHiPS?

SHiPS is a new module that Microsoft has made available in PSCloudShell, and it gives PowerShell developers the ability to write PowerShell providers in PowerShell itself. It exposes a set of classes that you can inherit from to create functionality similar to providers written in C# or another .NET language, but without all the mucky-muck. To achieve this, it builds on an existing open source project, the PowerShell Provider Framework (P2F), from Jim Christopher (AKA Beefarino) of CodeOwls.
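
To make the "classes that you can inherit" idea concrete, here is a minimal sketch of a SHiPS-based provider. The `Library` and `Book` classes and the `Library.psm1` file name are made up for illustration; the base classes `SHiPSDirectory` and `SHiPSLeaf` and the `GetChildItem()` override come from the SHiPS module, which is assumed to be installed and imported before this file is loaded.

```powershell
using namespace Microsoft.PowerShell.SHiPS

# Hypothetical leaf node: an item with no children.
class Book : SHiPSLeaf
{
    Book([string]$name) : base($name) { }
}

# Hypothetical container node: SHiPS calls GetChildItem()
# whenever someone lists this location on the drive.
class Library : SHiPSDirectory
{
    Library([string]$name) : base($name) { }

    [object[]] GetChildItem()
    {
        # Hard-coded sample data; a real provider would query something here.
        return @(
            [Book]::new('Dune'),
            [Book]::new('Neuromancer')
        )
    }
}
```

Assuming the code above is saved as `Library.psm1`, mounting it as a drive should look roughly like this (the root is given as `module#class`):

```powershell
Import-Module SHiPS
Import-Module .\Library.psm1
New-PSDrive -Name Lib -PSProvider SHiPS -Root 'Library#Library'
Get-ChildItem Lib:\
```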
